Containers provide portability and consistency, and are used for packaging, deploying, and running cloud-native data science applications. Docker is the most popular virtualization environment to deliver software in containers, and it is well supported by the R community. Among its many use cases, Docker is most commonly used to deploy reproducible workflows and to provide isolation for Shiny apps. In this post, we review the basics of working with Docker to lay the foundation for "dockerizing" data applications in the next post.

What is Docker

Note: this post relies heavily on the Docker documentation to provide an easy-to-reference summary of the main terms and concepts.

Docker is an open platform for developing, shipping, and running applications. Docker enables you to separate your applications from your infrastructure so you can deliver software quickly. With Docker, you can manage your infrastructure in the same ways you manage your applications. – Docker documentation

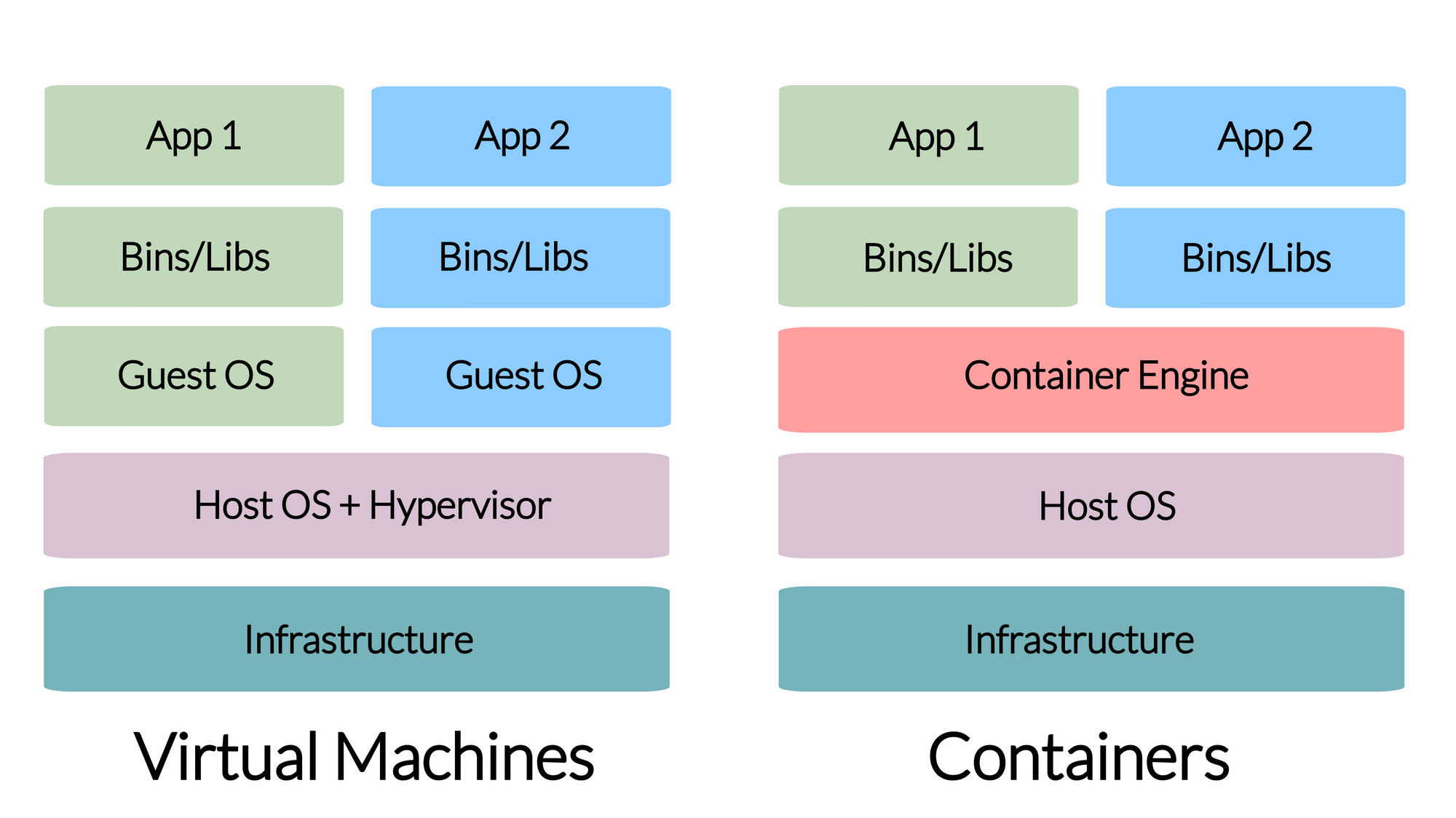

Containers bundle their own software, libraries and configuration files and are isolated from one another. Containers are the run-time environments or instances defined by container images.

Install Docker

To download and install Docker for your platform of choice, visit the Docker download page and follow the instructions.
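
Once the installation finishes, a quick check along the following lines can confirm that the CLI is on your path and that it can reach the daemon. This is a minimal sketch: it assumes the Docker daemon is already running and that your machine can reach Docker Hub. hello-world is Docker's official test image.

```shell
# Print the version of the installed Docker CLI
docker --version

# Pull and run the official hello-world test image;
# --rm removes the container again once it exits
docker run --rm hello-world
```

If both commands succeed, the second one prints a short greeting that explains which steps Docker performed to produce it.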

Docker concepts

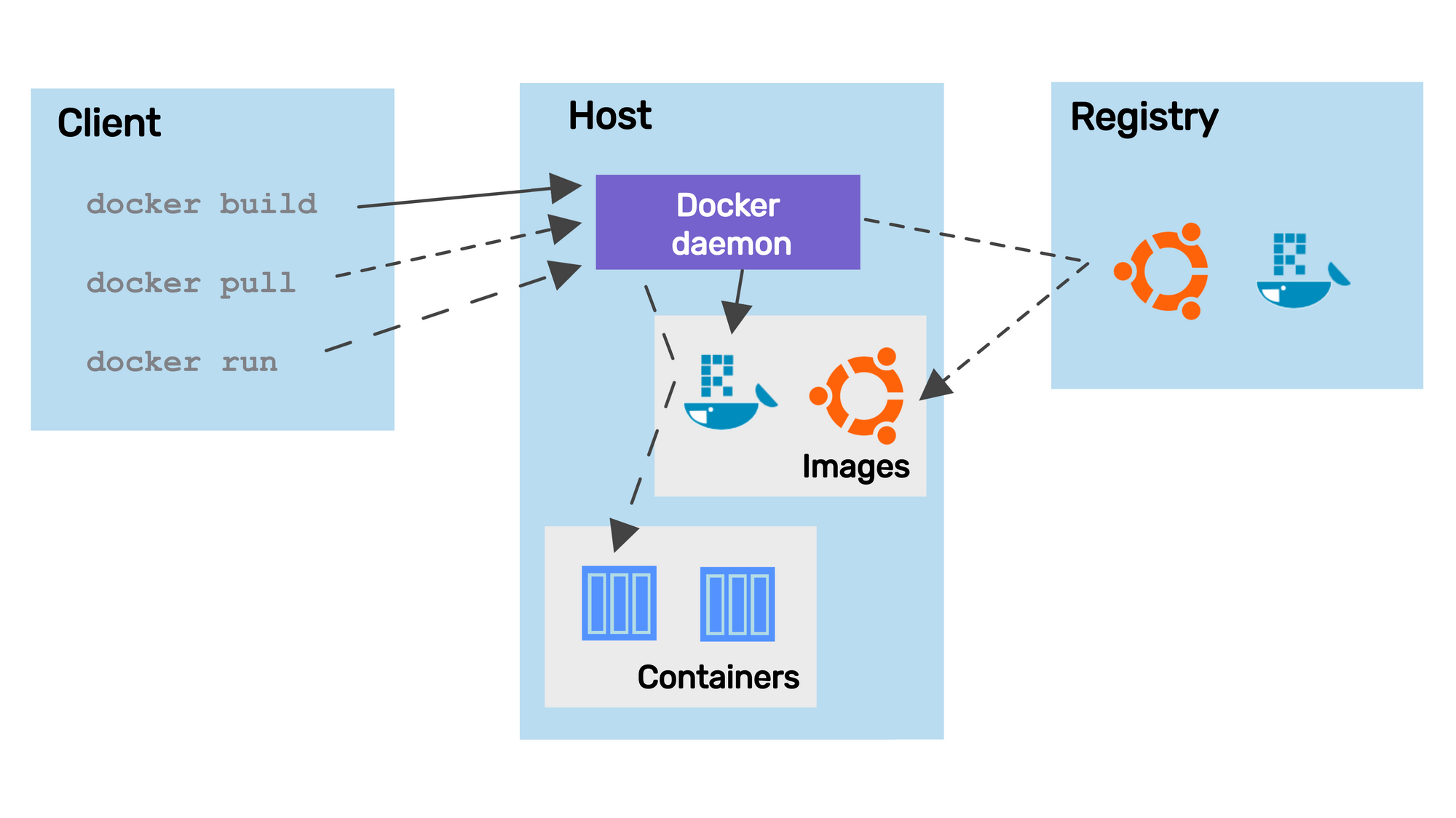

Let's review the most important concepts. The image below illustrates how all the Docker-related concepts all fit together:

Docker Engine

The Docker Engine is a client-server application with three parts: a server (dockerd, a long-running daemon process that listens for API requests), a REST API (the interface that programs use to talk to the daemon), and a command-line interface (docker, the client side).

The CLI uses the REST API to control or interact with the Docker daemon. The daemon creates and manages Docker objects, such as images and containers.
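
To make the client-server split concrete, the sketch below asks for version information twice: once through the CLI and once by calling the REST API directly. The second command rests on assumptions that may not hold on your system: a Linux host, the daemon listening on its default Unix socket at /var/run/docker.sock, curl installed, and a user account that is allowed to access the socket.

```shell
# Through the CLI: reports a Client section (docker) and a Server section (dockerd)
docker version

# Directly against the REST API, bypassing the CLI.
# Assumes the default Unix socket path on a Linux host.
curl --silent --unix-socket /var/run/docker.sock http://localhost/version
```

The curl call returns JSON that carries the same server details shown in the Server section of the docker version output.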

Docker registries

A Docker registry stores Docker images. Docker Hub is a public registry, and Docker is configured to look for images on Docker Hub by default. There are many other registries, and users can also run their own private registry.
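
The default registry shows up in how image names are resolved. In the sketch below, the first two commands refer to the same image: the short name is expanded to the fully qualified Docker Hub reference. The official alpine image is used only because it is small, and registry.example.com/team/app:1.0 is a hypothetical placeholder, not a real image.

```shell
# Short name: resolved against Docker Hub, the default registry
docker pull alpine

# The same image written out in full: registry/repository:tag
docker pull docker.io/library/alpine:latest

# For any other registry, the host name has to be part of the image name.
# Hypothetical placeholder, left commented out:
# docker pull registry.example.com/team/app:1.0
```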

Docker images

An image is a read-only template with instructions for creating a Docker container. An image can be based on another image, the so-called base or parent image, with additional customization on top of it.

Docker containers

A container is a runnable instance of an image. Users can create, start, and stop a container using the Docker API or CLI. It is also possible to connect a container to networks or attach storage to it.

By default, a container is isolated from other containers and the host machine. The degree of isolation can be controlled by the user and depends on whether the container is connected to networks, storage, other containers, or the host machine.
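
The container lifecycle and the isolation controls described above could look roughly like this on the command line. The container name demo-web is a hypothetical choice, the sketch assumes host port 8080 is free, and nginx and alpine are official images picked only for illustration.

```shell
# Create a container from the nginx image without starting it;
# -p publishes container port 80 on host port 8080
docker create --name demo-web -p 8080:80 nginx

docker start demo-web   # start the container
docker ps               # list running containers
docker stop demo-web    # stop the container
docker rm demo-web      # remove the stopped container

# Tighter isolation: no network access, only a loopback interface is listed
docker run --rm --network none alpine ip addr

# Looser isolation: mount the current host directory into the container
docker run --rm -v "$PWD":/work -w /work alpine ls
```

The last two commands differ only in their flags, which is how the degree of isolation is chosen per container rather than globally.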

Dockerfile

Docker builds images by reading the instructions in a Dockerfile, a text document that contains all the commands needed to assemble an image. The build itself is started with the docker build CLI command. You will learn more about the Dockerfile as part of the worked Shiny example later.
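
Until then, a toy example may help to show the moving parts. The sketch below writes a three-instruction Dockerfile into a new directory, builds an image from it, and runs a container. The directory and image name docker-demo and the file /hello.txt are hypothetical names chosen for illustration, and this is not the Shiny setup covered later.

```shell
# Create a build directory and write a minimal Dockerfile into it
mkdir -p docker-demo
cat > docker-demo/Dockerfile <<'EOF'
# Base (parent) image to build on
FROM alpine:3.19
# Customization on top of the base image
RUN echo "Hello from a custom image" > /hello.txt
# Default command executed when a container starts
CMD ["cat", "/hello.txt"]
EOF

# Build an image tagged docker-demo, using the directory as build context
docker build -t docker-demo docker-demo

# Run a container from the new image; prints "Hello from a custom image"
docker run --rm docker-demo
```

The FROM line is where the base image comes in, and each instruction after it adds a customization on top.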

CLI commands

The most common Docker CLI commands are:

- docker login: log into a Docker registry
- docker build: build a Docker image based on a Dockerfile
- docker push: push a locally built image to a Docker registry
- docker pull: pull an image from a registry
- docker run: run a command in a new container based on an image

You will learn more about these commands in an upcoming post.

Summary

Learning Docker can seem daunting at first, but it is an incredibly powerful piece of technology once you get the hang of it. Containers have also become a common building block of modern web applications.